After a 48-hour ban, Claude reached the top of the App Store

On Saturday morning, Sam Altman shared a screenshot of an internal memo on X.

The memo, which he had written to OpenAI employees on Thursday night, said that the company was in talks with the Pentagon and that he hoped to help "de-escalate the situation." He added a few lines of commentary to the post, essentially to explain publicly what had been happening over the past few days.

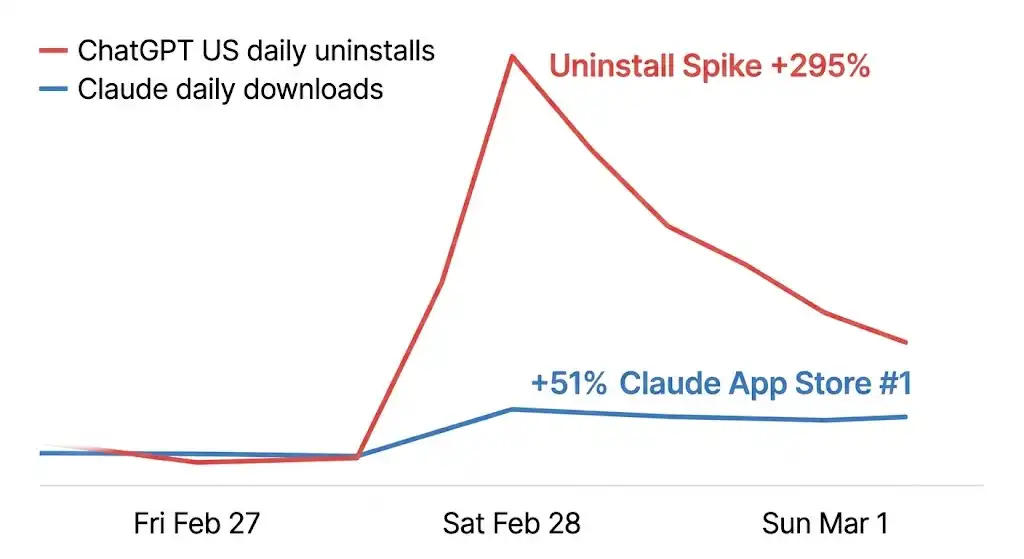

At the time of his tweet, Claude had already climbed to the number one spot on the U.S. App Store's free chart. Just the day before, ChatGPT was still sitting in that position.

Sensor Tower's data captured what unfolded in the hours that followed. On that Saturday alone, ChatGPT's uninstall rate in the U.S. surged by 295% compared with a typical day, and its 1-star reviews jumped by 775%. Meanwhile, Claude's daily downloads rose by 51%. A wave of "Cancel ChatGPT" posts appeared on Reddit, where users shared screenshots of their cancelled subscriptions and some wrote "fastest install of my life" in the comments. A website called QuitGPT.org went live, claiming that 1.5 million people had taken action.

On Monday, the influx of users was so high that Claude experienced a massive outage. The company flagged as a "supply chain security risk" faced server overload due to the overwhelming number of users.

Precision Product Counterattack

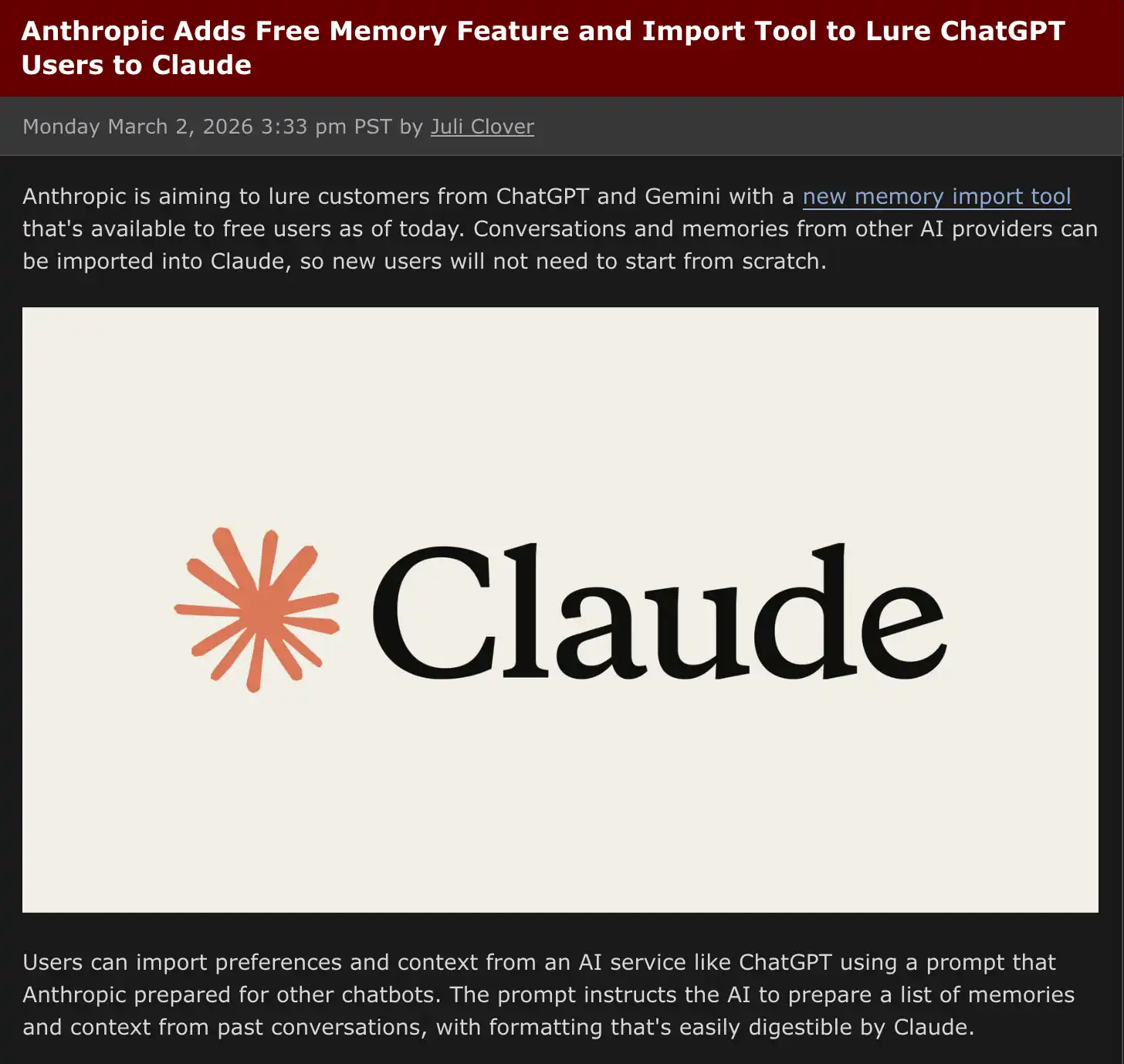

On the same day the uninstall wave was brewing, Anthropic launched a memory migration tool.

The functionality itself wasn't complex. Users copy a prompt into ChatGPT that makes it output all of its stored memories and preferences, then paste the result into Claude, which lets Claude pick up where ChatGPT left off. The slogan on the official website was a single line: "switch to Claude without starting over."

The timing of this tool was its most critical attribute.

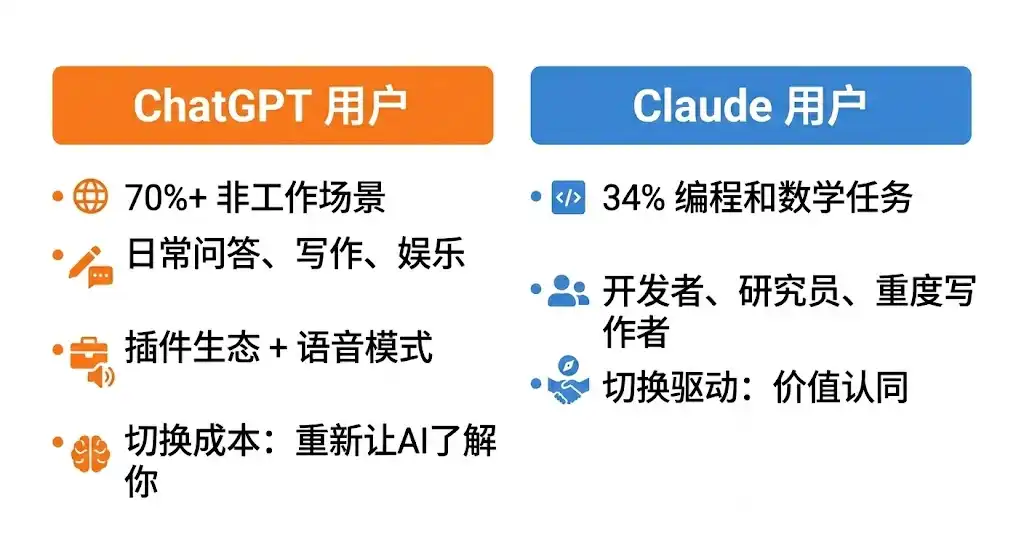

OpenAI's own data showed that by mid-2025, over 70% of ChatGPT usage was non-work-related, covering daily Q&A, writing, entertainment, and information retrieval. For many people ChatGPT was their first encounter with AI, and it kept them through a vast plugin ecosystem, Voice Mode, and deep integration with the third-party apps embedded in their daily lives. For these users, the cost of switching was not just "downloading a new app" but introducing themselves all over again to an AI that didn't know them. Accumulated memory was the most compelling reason to stay.

Anthropic's own research data shows that Claude's usage is highly concentrated. Programming and mathematical tasks account for 34%, the single largest category, while education and research have been the fastest-growing areas in the past year. The core users are developers, researchers, and heavy writers, who are more rational and more likely to switch tools based on a clear value judgment, as long as the migration cost is low enough.

The memory migration tool has minimized this cost. At the same time, Anthropic announced that the memory feature will be fully open to free users, a feature that was previously exclusive to paid users.

However, a significant portion of these incoming users are not the original target users of Claude.

Judging from feedback on social media, many regular users migrating from ChatGPT have the same first reaction to Claude: "It's different." Some feel that Claude's responses go deeper and that it pushes back proactively rather than just agreeing. Others find its writing cleaner but miss image generation and an interactive experience like Voice Mode.

Some initially wanted a "more obedient ChatGPT alternative" but discovered that Claude has a stronger personality, one that takes time to get used to. A TechRadar migration guide titled "I Wish Someone Told Me These Things" has been widely shared in recent days. Its core message is that Claude and ChatGPT follow fundamentally different usage logic: the former is more like an opinionated work partner, while the latter is more like a general-purpose assistant.

This difference was originally the positioning of the two products, but it was inadvertently magnified by this event. Users rushed into Claude due to ethical considerations, only to discover a product that was different from their expectations, a more discerning, more boundary-aware AI. This difference could have been a reason for churn, but at this particular juncture, it has become a reason to stay: if you believe in a company's stance, you are more likely to accept the logic of its product.

Days after the launch, Anthropic released data: active free users grew by over 60% compared to January, and daily new registrations quadrupled. Claude briefly crashed due to excessive traffic, with thousands of users reporting login issues, which were resolved within hours.

The Three Words in the Contract: What OpenAI Said and Did

Anthropic is the first company to deploy AI models to a classified U.S. military network, a partnership completed through Palantir, with a contract value of approximately $200 million. However, over the past few months, the relationship between the two parties has continued to deteriorate. The core of the dispute is a clause: the Pentagon requires the AI model to be open for "all lawful purposes" without any conditions. Anthropic insists on including two exceptions in the contract: it cannot be used for mass surveillance of U.S. citizens and cannot be used for fully autonomous weapon systems.

Around February 20, it was reported that an Anthropic executive questioned Palantir about Claude's usage during the January US military operation to capture Venezuelan President Maduro. The military was strongly displeased. On Thursday, the Pentagon issued an ultimatum, demanding a response from Dario Amodei by 5 p.m. that day.

Amodei issued a statement before the deadline, saying that the company could not accept the current terms, "not because we are against military use, but because in rare cases, we believe AI could undermine rather than uphold democratic values." Trump promptly announced a full six-month federal agency ban on Anthropic products, and Hegseth labeled the company a "supply chain security risk," a tag typically reserved for companies from foreign adversaries. The contract was thus terminated.

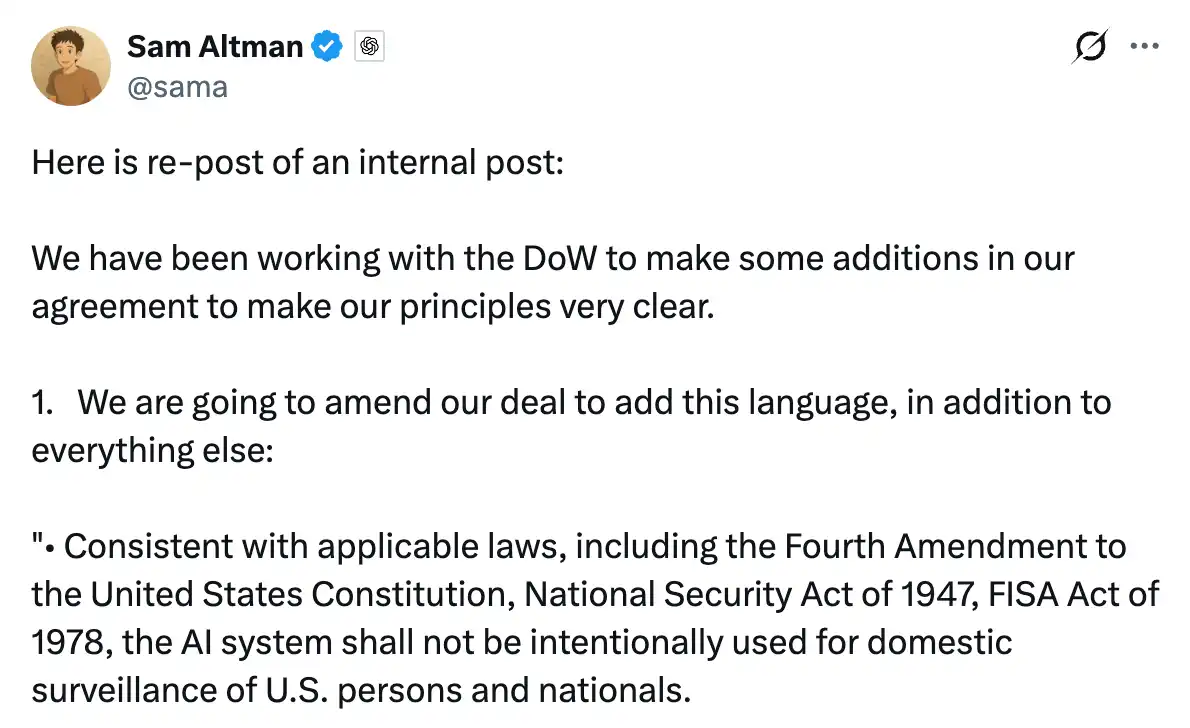

The vacancy was quickly filled. Later that same day, OpenAI announced a contract with the Pentagon. In his Thursday internal memo, Altman had made his position clear, writing that this was already an "industry-wide issue" and that OpenAI and Anthropic held the same "red lines": opposition to mass surveillance and autonomous weapons. On Friday, an agreement was reached to deploy models on classified military networks, with the models restricted to running in the cloud and engineers stationed for oversight. OpenAI stated that the same two restrictions were included in the contract.

Altman then held an open Q&A on X, answering questions for several hours. When asked why the Pentagon accepted OpenAI but banned Anthropic, his response was, "Anthropic seemed more focused on specific prohibitions in the contract rather than referencing applicable laws, and we were satisfied with referencing the law."

This statement speaks to methodological differences, but it opened up the real controversy of the matter.

The sticking point that broke Anthropic's talks was the phrase the Pentagon insisted on including: AI systems can be used for "all lawful purposes." Anthropic refused because, in a national security context, this phrase does not mark a fixed boundary. Current law has not fully caught up with AI capabilities, and the scope of "lawful" will be determined by the government's own interpretation. OpenAI agreed to the phrase while stating that the same protections were written into its contract.

Legal experts subsequently analyzed the contract terms publicly disclosed by OpenAI, pointing out two specific wording issues.

The surveillance clause states that the system shall not be used for unconstrained monitoring of U.S. citizens' private information. Samir Jain, Vice President of Policy at the Center for Democracy and Technology, pointed out that this wording implies that a constrained form of monitoring is allowed. Under current legal frameworks, the government can legally purchase citizens' location records, browsing history, and financial data from data brokers and feed them to AI for analysis, without this technically constituting "unlawful surveillance." Amodei cited this very example in a subsequent interview with CBS.

The weapons clause states that the system shall not be used for autonomous weapons in situations requiring human control by law, regulation, or department policy. This qualifier means that the restriction only applies if human control is already mandated by other regulations, with the constraint being wholly dependent on existing policies. The Pentagon reserves the right to modify its internal policies at any time. Legal scholar Charles Bullock wrote on X that the weapons clause in the contract relies on DoD Directive 3000.09, which requires commanders to retain "appropriate levels of human judgment," and this "appropriateness" is a standard open to flexible interpretation.

OpenAI's response to these concerns is that the model can only run in the cloud, which architecturally precludes direct integration into weapon systems. The contract also names its specific legal basis, which OpenAI argues carries more weight than purely prohibitive clauses because the law is a vetted framework. Altman himself also acknowledged in the Q&A, "If we need to fight this war in the future, we will, but obviously, this exposes us to some risks."

This is not merely a matter of one company willing to compromise and another sticking to principles; this is a clash of fundamentally different security philosophies. OpenAI's bottom line is: I won't do anything illegal. Anthropic's bottom line is: Even if the law hasn't caught up yet, if I believe something shouldn't be done, I won't do it either.

This divergence has also created internal divisions at OpenAI. Last week, several OpenAI employees signed an open letter supporting Anthropic's stance and opposing its designation as a supply chain risk. Researcher Leo Gao openly questioned whether the company's contract provided sufficient protection. Chalk graffiti criticizing the company appeared on the sidewalk outside OpenAI's San Francisco office, while supportive messages were left outside Anthropic's. Altman's lengthy Q&A session was aimed largely at the employees within his own company who had sided with Anthropic.

Two Outcomes of the Same Narrative

For years, Anthropic has framed its security mission around "preventing civilization-level risks," equating the potential threat of cutting-edge AI with that of nuclear weapons and positioning itself as a gatekeeper on this frontline. This narrative is at the core of its brand and is how it has gained trust in the capital markets.

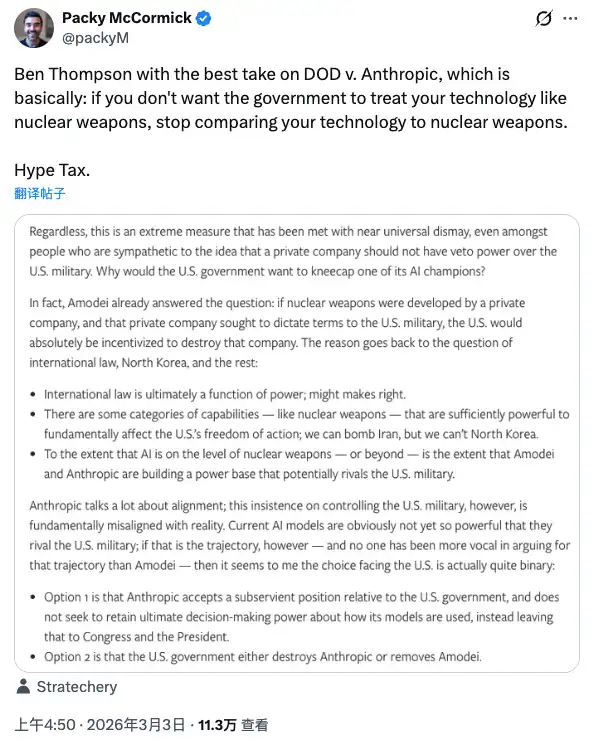

As the saga unfolded, tech commentator Packy McCormick cited a concept from Ben Thompson: the Hype Tax. The idea is that if you build your influence on extreme storytelling, you will have to pay for it when that narrative runs into real power. Liken AI technology to nuclear weapons, and the government will treat you the way it treats nuclear weapons.

Anthropic paid the price for this narrative: it lost a contract, was listed as a security risk, was named by the President, and saw all its products ordered removed from federal systems within six months.

But in the same weekend, the same narrative had a completely opposite effect in another dimension.

What ordinary users saw was not contract terms, legal explanations, or debates on security philosophy. What they saw was this: one company said no and got kicked out by the government; another company said yes and got the contract. They made their choice using their own framework of judgment, and the results were a 295% surge in uninstalls, the #1 spot on the App Store, and crashing servers.

This was a rare collective consumer moral stand in the history of the AI industry.

Anthropic did not spend a penny of its PR budget on this matter. Amodei's statement was restrained in tone: no appeal for user support, no mention of OpenAI, no portrayal of the company as a martyr. The result came anyway.

One detail is worth noting: the event that sent users flocking to Claude was, in essence, OpenAI doing something entirely reasonable from a business standpoint. It signed an agreement at a moment when a competitor had been banned and the contract was left pending, and it claimed to have negotiated the same protection terms. Altman also made it clear that he did this in part to help calm the situation and prevent further harm to Anthropic.

Regardless of the motive, the result is that OpenAI got the contract, and Anthropic's user base grew. Both sides incurred costs, both sides gained benefits, just in different units of measure.

There is one more thing worth mentioning here.

The Pentagon contract that Anthropic lost was worth about $200 million.

Anthropic's current annual revenue is $14 billion. The goal is to reach $26 billion by 2026.

Anthropic just completed a $30 billion Series E funding round last month, with a valuation of $380 billion.

The math on this deal is now easy to do. But another question remains unanswered: when AI is truly used at scale for military decision-making, will the "technical firewalls" written into contracts and the engineers deployed for oversight actually hold up, whether they are OpenAI's or the ones Anthropic originally asked for?

This question is not in any publicly disclosed contract.
