Sam Altman's Twenty-Four Hours: The Pentagon said "no" twice, but only one was serious

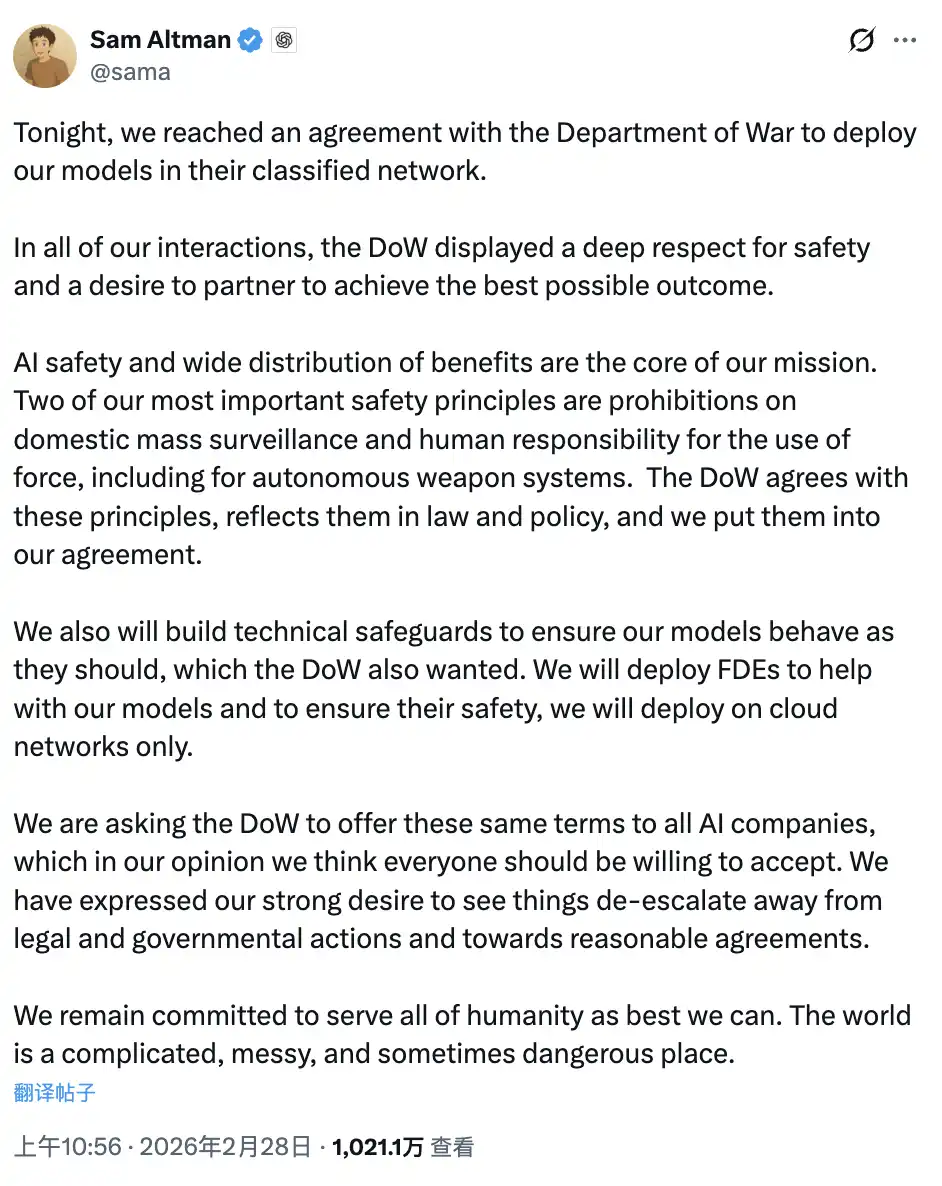

On the morning of February 28th Beijing time, Sam Altman tweeted: "Tonight, we reached an agreement with the Department of War to deploy our models in their classified network."

Rewind about twelve hours to the evening of February 27th Beijing time. The same man sat in front of the CNBC Squawk Box camera and calmly said: "For Anthropic, despite many disagreements between us, I fundamentally trust this company. I believe they truly care about safety." He also said: "I don't think the Pentagon should be using the Defense Production Act to threaten these companies."

In less than twelve hours, from the same mouth, two different statements. What happened in between is worth explaining.

Two Similar Terms, Two Different Outcomes

Let's first put the core content of the two contracts side by side.

Anthropic's request: Claude shall not be used for mass surveillance of U.S. citizens, and shall not be used in autonomous weapons systems that operate without human intervention.

When Altman announced the agreement in his tweet, he cited the same two principles: "We have long believed that AI should not be used for mass surveillance or autonomous lethal weapons, and humans should always be present in high-risk automated decision-making." He also wrote: "The Pentagon has accepted these principles, will reflect them in law and policy, and we have written them into the contract."

The wording is almost identical.

One company was banned, labeled a "supply chain risk," and personally called a "woke far-left company" by Trump on Truth Social. The other received a contract and entered the Pentagon's classified network, and Sam Altman used the phrase "reached an agreement" in his tweet: calm and business-like, as if a routine B2B transaction had been completed.

This is the question the entire article aims to answer: with similar terms, why are the outcomes completely different?

The answer is not in the terms but in the logic behind the terms.

One key background fact is worth clarifying first: of the four AI companies involved (Anthropic, OpenAI, Google, and xAI), Anthropic was the only one whose models were permitted on the Pentagon's classified network. OpenAI's original contract covered only unclassified, everyday office scenarios. This negotiation was essentially about OpenAI wanting access to the classified network, and the Pentagon's condition for entry was the contentious "for all lawful purposes" clause. Anthropic was already inside, but the Pentagon demanded that it take down the safeguards it had put up when it entered.

The Pentagon Doesn't Care What Is Written; It Cares Who Said It

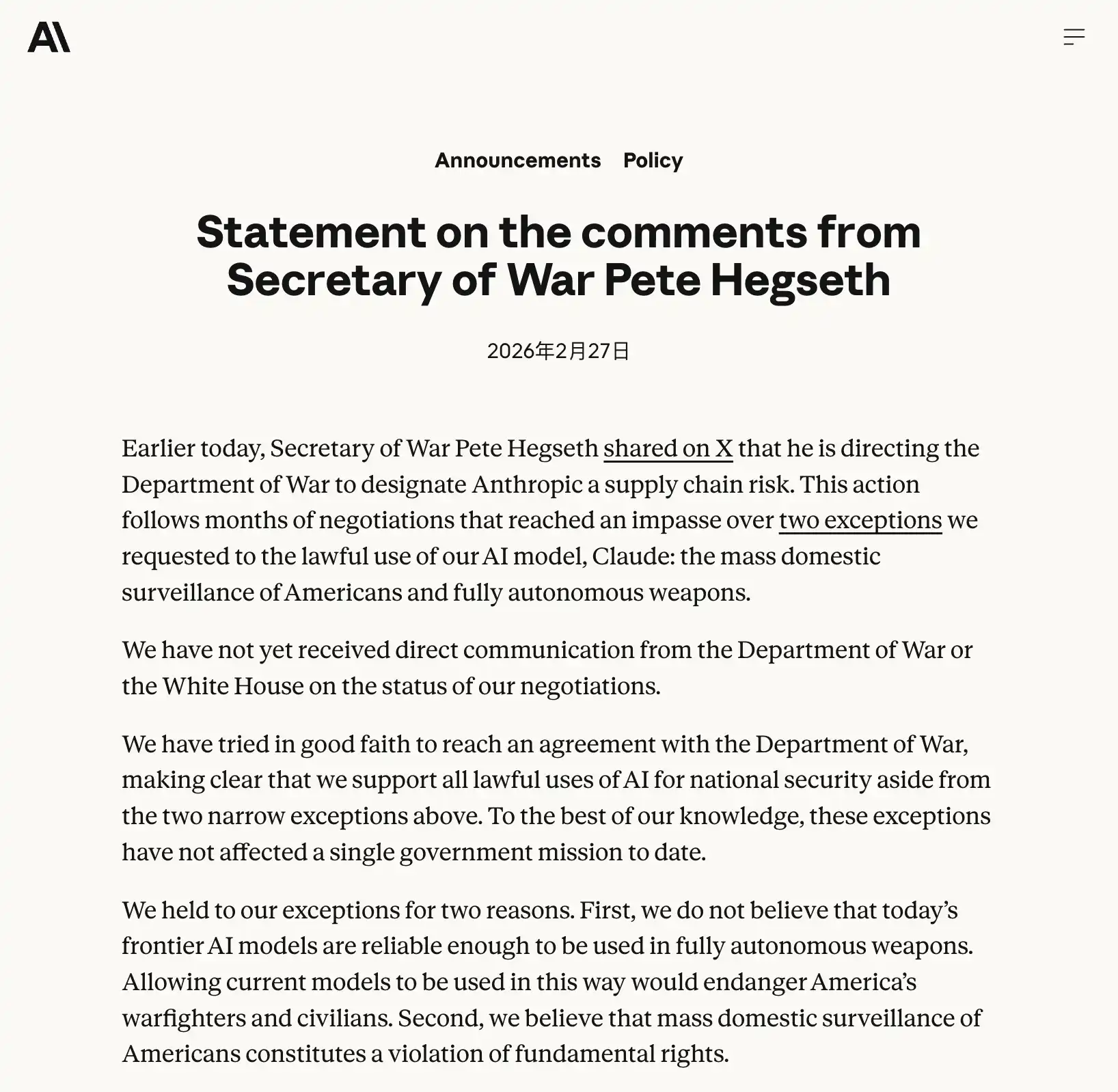

To understand this, you first need to understand what Anthropic's Dario Amodei said in that public letter.

He wrote: "Anthropic understands that military decisions are made by the Pentagon, not private companies. We have never objected to specific military actions, nor have we attempted to restrict the use of our technology on an ad hoc basis."

Then his tone shifted: "However, in very few cases, we believe AI may undermine rather than defend democratic values. The threats will not change our position: we cannot in good conscience accept their request."

What does this statement mean in contract language? Anthropic demanded that the principles be written into the contract terms as hard constraints. If the other party breached them, Anthropic would have the right to refuse further service.

What does the Pentagon hear? A private company telling the government's military: in certain situations, I may choose not to follow your orders, and I define the boundaries.

This is unacceptable to any military. Not because the military actually wants large-scale surveillance, but because the very question of "who has the authority to decide" is the most sensitive nerve in the military command system. Defense acquisition expert Jerry McGinn put it bluntly: military contractors usually have no authority to dictate how the Pentagon can or cannot use their products, "otherwise, every contract would have to discuss specific use cases, which is not practical."

OpenAI gave a completely different answer.

In a memo, Altman told employees that OpenAI would propose building its own "safety stack" for the Pentagon: a multi-layered protection system of technical controls, policy frameworks, and human oversight, embedded between the AI models and their actual use. OpenAI also said it could deploy researchers with security clearances into classified networks to monitor AI behavior continuously, and that its models would be deployed only in the cloud, not in edge systems such as drones.

In translation: you oversee, you supervise, you see everything that happens. If issues arise, we share responsibility, and you don't come to me for explanations.

"Rules are set in stone, and I execute" versus "I embed, you oversee" are two completely different power dynamics, and the Pentagon only accepts the latter.

What OpenAI Does Best Is Exactly What the Pentagon Wants

There's an uncomfortable irony that needs to be spelled out here.

OpenAI has already practiced the "technical transparency" and "continuous oversight" it promised to the Pentagon on its own users.

In August 2025, OpenAI quietly unveiled a new monitoring mechanism in an official blog post about a user mental health crisis: When the system detects a user "planning to harm others," the conversation is shifted to a dedicated channel overseen by a trained human review team authorized to escalate cases to law enforcement. This was proactively disclosed by OpenAI but buried in a mid-length piece on mental health, met with a muted response.

In February 2026, just before the signing of this contract, OpenAI launched an ad system and updated its privacy policy to make one thing clear: Free and Basic plan users engaging with ChatGPT will undergo "in-session contextual analysis" to show relevant ads based on the conversation topic. For example, if you're discussing recipes, you might see ads for food delivery services. OpenAI emphasizes that the conversation content itself is not shared with advertisers, but the analysis is happening in real-time. The ads began testing on February 9.

In November 2025, OpenAI's third-party analytics provider Mixpanel was breached, exposing some API users' names, emails, approximate locations, operating systems, and browser information. OpenAI subsequently terminated its relationship with Mixpanel, and lawsuits are pending. The incident primarily affected API developers; the only regular ChatGPT users affected were those who had submitted help center tickets through the platform.

This is the company that pledged to the Pentagon "technical transparency, continuous oversight, let you see everything happen."

What it does best is let others see in, because that is how it is used to treating its own users.

Anthropic believes rules can constrain users; OpenAI believes embedding its own people is more effective than any terms. The former is idealistic compliance logic, the latter is realistic influence logic. The Pentagon chose the latter because it's more familiar and controllable to them.

What Transpired in the Hours in Between?

Fast forward to the early morning of February 28 Beijing time, 5:01 p.m. on February 27 Eastern Time.

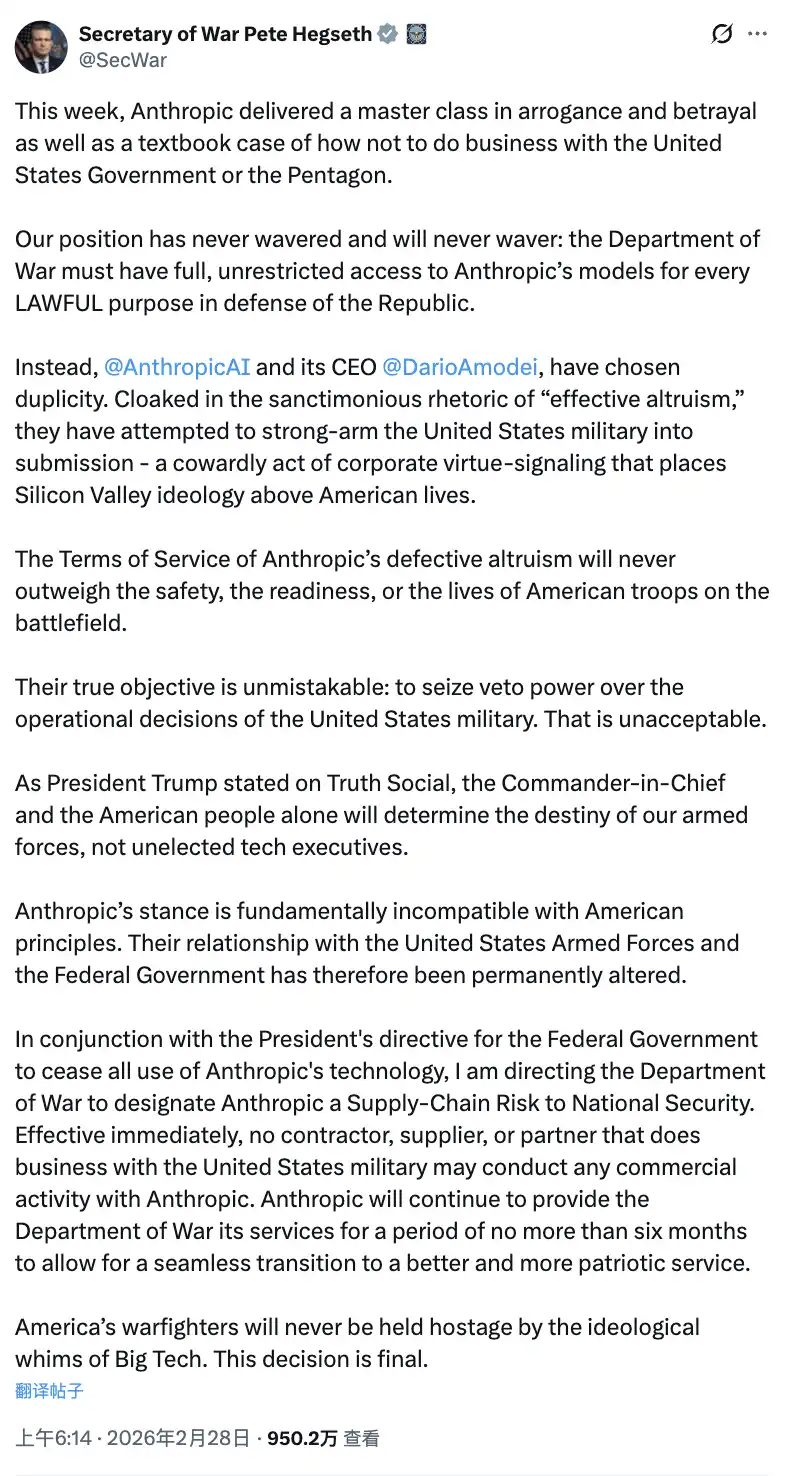

The deadline for Anthropic arrived. Dario did not compromise. Trump announced the ban in a Truth Social post, Hegseth designated Anthropic a "supply chain risk" on X, and Anthropic declared it would respond through legal means.

One notable line in Hegseth's statement was: "Anthropic's position is fundamentally incompatible with American principles." Yet in the same statement, he said that Anthropic could continue to provide services to the Pentagon "for no more than six months to ensure a smooth transition." In other words, the Pentagon had just designated a company a national security risk but would keep using that company's products. No one has directly addressed this logical contradiction.

Hours later, Altman posted that tweet.

Recall his remarks at the all-hands meeting earlier that day: he expressed his hope that OpenAI could "help cool the situation" and find a solution that could "set a framework for the entire industry." This was not the tone of someone waiting passively.

This is not the first time Silicon Valley has seen this kind of maneuver.

In 2023, OpenAI's nonprofit board dismissed Altman for being "less than candid," citing his overly rapid pace and communication issues. Five days later, Altman returned with a collective support letter from employees, leading to the dissolution of the board. He then spearheaded the company's transition from a nonprofit structure to a for-profit entity, incorporating the former nonprofit mission into a new legal framework.

This time, it was termed "finding a common framework."

What Did Dario Lose?

Jerry McGinn, Director of the Center for Government Contracting at the U.S. Strategic Institute, provided a sober assessment of the situation: "This is excellent PR for Anthropic, and they don't even need that $200 million."

Financially, this assessment is accurate. In 2025, Anthropic generated $14 billion in annual revenue and was valued at $380 billion, and its largest shareholder, Amazon, is unlikely to rethink its investment over a government ban. Legal action could still turn the situation around. The IPO valuation is unlikely to suffer significant damage, and the narrative of "resisting government pressure, upholding safety principles" is something no PR budget could buy.

But on one thing, Dario did lose.

The practical standard for using AI in the military domain will be set inside the Pentagon by OpenAI, not Anthropic. The safety stack embedded in classified networks, the OpenAI researchers holding security clearances, the monitoring system tracking AI behavior in real time: over the next few years, these will grow into de facto industry norms.

Anthropic held its ground on principle, but lost its seat at the rule-making table.

And the one who took that seat was the same person who, in less than twelve hours, went from "support" to "sign."

The most ironic part: the company that took AI safety most seriously was kicked out of the place that needed AI safety the most.

The company that took its place had, two weeks earlier, rolled out a mechanism to feed user conversations into an ad system; three months earlier, it had suffered a third-party data breach; and before that, it had quietly disclosed a system that scans conversations and can report users to law enforcement.

In Silicon Valley, Altman's less-than-twelve-hour move has a name. It's not called betrayal; it's called timing.
